
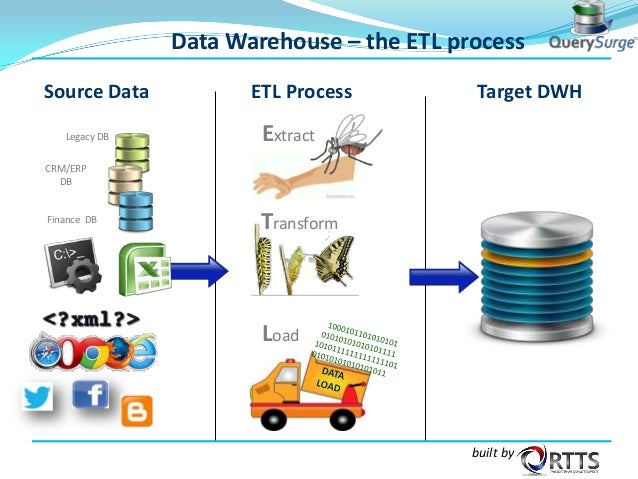

A data pipeline is a sequence of tasks carried out to load data from a source to a destination. Data pipelines move data from sources such as databases, applications, data lakes and IoT sensors to destinations such as data warehouses, analytics databases and cloud platforms. The pipeline runs as a sequence in which each step creates an output that serves as the input for the next step, carrying on until the pipeline is complete, i.e. until it delivers transformed, optimized data that can be analyzed for business insights.

You can think of a data pipeline as having 3 components: source, processing steps and destination. In some cases, the source and destination may be the same, and the data pipeline may just serve to transform the data. Whenever data is processed between any two points, think of a data pipeline as existing between those two points.

Modern data pipelines use automated CDC ETL tools like BryteFlow to automate the manual steps (that is, manual coding) required to transform and deliver updated data continually.

What is an ETL Pipeline?

The ETL (Extract, Transform, Load) pipeline can be thought of as a series of processes that extract data from sources, transform it, and load it into a data warehouse (on-premise or cloud), database or data mart for analytics or other objectives. This could include steps like moving raw data into a staging area and then transforming it, before loading it into tables on the destination.
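To make the idea of a source, a chain of processing steps and a destination concrete, here is a minimal Python sketch. It is an illustration only and not how BryteFlow or any particular tool works: the function names (`extract`, `drop_invalid`, `normalize`, `run_pipeline`), the sample records and the in-memory list standing in for a warehouse table are all assumptions made up for this example.

```python
from typing import Callable

Record = dict
Step = Callable[[list], list]


def extract() -> list:
    # Hypothetical in-memory source standing in for a database or application.
    return [
        {"id": 1, "amount": "10.50", "region": " east "},
        {"id": 2, "amount": "n/a", "region": "West"},
        {"id": 3, "amount": "7.25", "region": "west"},
    ]


def _is_number(text: str) -> bool:
    # True when the text can be parsed as a float.
    try:
        float(text)
        return True
    except ValueError:
        return False


def drop_invalid(rows: list) -> list:
    # Processing step 1: discard rows whose amount is not numeric.
    return [row for row in rows if _is_number(row["amount"])]


def normalize(rows: list) -> list:
    # Processing step 2: convert amounts to floats and tidy region names.
    return [
        {
            "id": row["id"],
            "amount": float(row["amount"]),
            "region": row["region"].strip().lower(),
        }
        for row in rows
    ]


def run_pipeline(source: Callable[[], list], steps: list, destination: list) -> list:
    # The output of each step becomes the input of the next one.
    data = source()
    for step in steps:
        data = step(data)
    # "Load": append the transformed rows to the destination.
    destination.extend(data)
    return destination


if __name__ == "__main__":
    warehouse_table: list = []  # assumed stand-in for a destination table
    run_pipeline(extract, [drop_invalid, normalize], warehouse_table)
    for row in warehouse_table:
        print(row)
```

Keeping each step as a plain function that takes rows and returns rows means steps can be added, removed or reordered without touching the rest of the pipeline, which mirrors the "output of one step is the input of the next" description.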
